There’s a tool making the rounds in CPG packaging circles that promises to tell you exactly where shoppers will look on your package, instantly, affordably, and without recruiting a single real person. In the AI heatmaps vs. eye tracking debate, AI-generated heatmaps have become the shiny new object of packaging research. And I get it. The pitch is compelling, especially as other AI tools gain popularity.

But after years of running in-person and webcam-based eye tracking studies for CPG brands, and working directly with Tobii, one of the largest eye tracking technology companies in the world, I want to be honest with you about what these tools actually are, what they’re actually built on, and what they fundamentally cannot see.

What AI Heatmaps Actually Are And Why They’re Not Eye Tracking

Let’s start with the terminology problem. These tools are called “AI heatmaps,” which implies they work like eye tracking. They don’t.

They are saliency models, which means they are algorithms trained to predict which visual elements are likely to capture bottom-up, involuntary attention based on low-level image properties. The model looks at your package or your shelf and identifies areas of high contrast, warm color, prominent faces, large text, and central positioning. Then it generates a heat map based on those predictions.

The output looks familiar. It feels scientific. But it does not measure how a shopper’s eyes actually moved. It is predicting which parts of an image look visually prominent, which is a very different thing.
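To make the distinction concrete, here is a deliberately tiny sketch of bottom-up saliency in Python. It is not any vendor's model (commercial tools use far more sophisticated learned models), and the function names and the `center_weight` value are my own assumptions for illustration. It scores each pixel only on local contrast plus a bias toward the center of the image.

```python
# Toy bottom-up saliency sketch (illustrative only; not any vendor's model).
# Input: a grayscale image as a list of rows, each pixel in 0..255.

def local_contrast(img, r, c):
    """Mean absolute difference between a pixel and its in-bounds neighbours."""
    rows, cols = len(img), len(img[0])
    diffs = []
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue
            rr, cc = r + dr, c + dc
            if 0 <= rr < rows and 0 <= cc < cols:
                diffs.append(abs(img[r][c] - img[rr][cc]))
    return sum(diffs) / len(diffs) if diffs else 0.0

def center_bias(rows, cols, r, c):
    """1.0 at the image centre, falling linearly to 0.0 at the corners."""
    cr, cc = (rows - 1) / 2, (cols - 1) / 2
    dist = ((r - cr) ** 2 + (c - cc) ** 2) ** 0.5
    max_dist = (cr ** 2 + cc ** 2) ** 0.5
    return 1.0 - dist / max_dist if max_dist else 1.0

def saliency_map(img, center_weight=0.3):
    """Blend contrast and centre bias, then scale so the hottest pixel is 1.0."""
    rows, cols = len(img), len(img[0])
    raw = [[(1 - center_weight) * local_contrast(img, r, c) / 255
            + center_weight * center_bias(rows, cols, r, c)
            for c in range(cols)] for r in range(rows)]
    peak = max(max(row) for row in raw)
    return [[v / peak for v in row] for row in raw] if peak else raw

# A dark 5x5 "package" with one bright, off-centre pixel.
img = [[0] * 5 for _ in range(5)]
img[1][1] = 255
heat = saliency_map(img)
hottest = max(((r, c) for r in range(5) for c in range(5)),
              key=lambda rc: heat[rc[0]][rc[1]])
print(hottest)  # (1, 1): the bright pixel wins on contrast alone
```

Notice what the function takes as input: pixels, and nothing else. There is no shopper, no task, and no shelf anywhere in it, which is exactly the limitation the rest of this article is about.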

The Sample Size Claim Deserves Scrutiny

Most AI heatmap tools make their case on data volume. You’ll see claims like “trained on 50,000 eye tracking studies” or “validated against millions of data points.” These numbers are meant to impress you, and they work.

Here’s what they don’t tell you.

I work directly with Tobii, a company that has collected more practical eye-tracking data than almost anyone on the planet. And even Tobii’s datasets (when you get into the specifics) are not concentrated in CPG packaging contexts. That data is distributed across a wide range of use cases: website UX testing, store layout and fixture design, UI/UX research, academic vision science studies, automotive interfaces, and more.

When an AI heatmap company claims a massive training dataset, the honest question to ask is: how much of that data was collected in a CPG packaging context, on shelf, with shoppers in an actual purchase mindset?

The answer, in virtually every case, is a small fraction of what they’re implying. What they’ve actually built is a general visual attention model and applied it to packaging. That’s a significant leap, and it matters more than most people realize.

What Saliency Models Detect vs. What Shoppers Actually Do

Saliency models are built to predict the kind of attention you can’t really control. In other words, the stuff that just grabs your eyes without you thinking about it. A flash of high contrast. A face. Big, bold text. Your brain notices these things automatically, and saliency models are pretty decent at predicting when that’s going to happen.

The problem is that shopping is not a passive visual experience. It is a goal-directed task.

When a shopper stands in front of a refrigerated beverage section looking for a low-sugar energy drink in a specific flavor, their eyes are not wandering freely across the shelf in response to whatever is visually loudest. They are running a search. They are applying brand recognition heuristics. They are filtering by color cues they’ve learned to associate with the category. They are sometimes reading and sometimes skimming. And they are doing all of this in one to three seconds before making a decision, reaching for something, or moving on.

This is called top-down attention, and it is driven by intent, familiarity, and task, not by which element on the package has the most visual contrast. Saliency models cannot measure it. They don’t have a mechanism for it. They have no way of knowing whether a shopper is brand loyal, a category novice, or in a rush. They cannot distinguish between a shopper who looked at your logo because they recognized it and a shopper who looked at it because it happened to be bright green.

The Clean Heatmap Problem

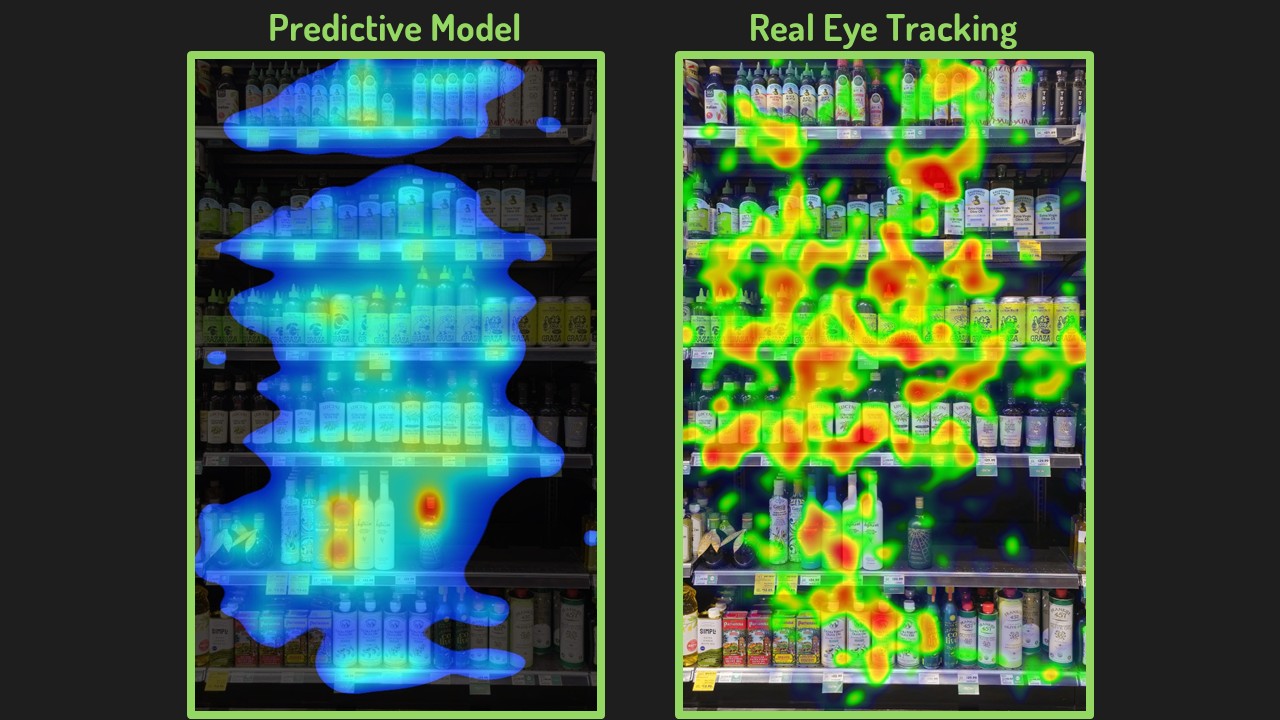

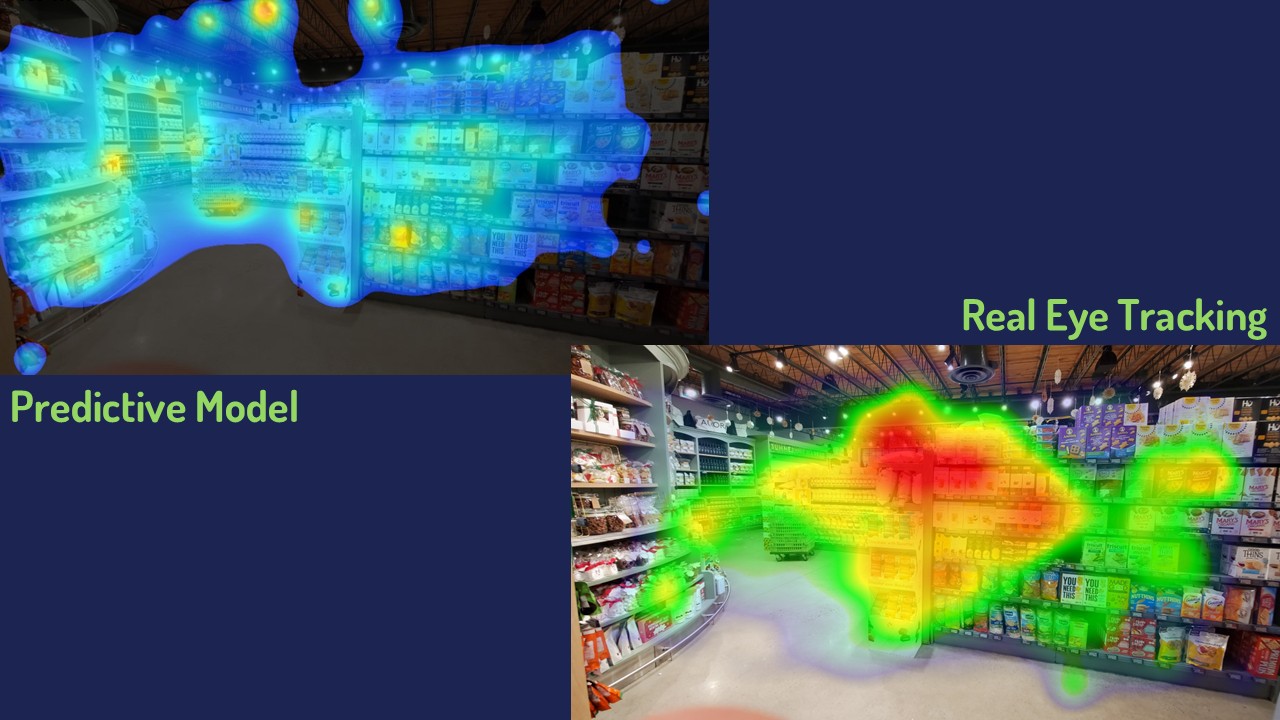

Here’s something I’ve noticed that doesn’t get talked about enough: AI-generated heatmaps tend to look suspiciously tidy.

In a real eye tracking study, the aggregate heatmap across even 50 or 60 participants is messy. Attention is distributed unevenly. Some elements draw a lot of fixations. Others that you’d expect to perform well get almost none. There are dead zones in places that shouldn’t be dead zones. The data tells a story that surprises you, and that surprise is the point.

AI heatmaps tend to spread activation fairly evenly across the package, weighted toward the most visually prominent features. They rarely produce the sharp, counterintuitive results that make real eye tracking data actionable. When everything looks moderately warm, you don’t actually know what to change.

Real data is honest in a way that model outputs often aren’t. The messiness is the signal.

What Webcam Eye Tracking Captures That AI Heatmaps Cannot

When I run a webcam eye tracking study for a CPG brand, here is what the data can tell us that no saliency model can approximate:

Viewing order. Saliency models produce a static prediction, which is a snapshot of where attention is likely to land. There is no concept of sequence. Real eye tracking captures a full scan path: what shoppers saw first, what they looked at next, and how attention moved across the package or shelf over time. For CPG brands, this matters enormously. If your brand name registers after your competitor’s, or your key claim is fifth in the visual sequence rather than second, that’s an easily addressed strategic problem a heatmap won’t show you.

Shelf context. When we test a package surrounded by competitors in a simulated shelf environment, attention patterns change substantially compared to testing in isolation. A product that performs beautifully on its own can disappear entirely on a crowded shelf. AI heatmaps typically test in isolation. Shelf studies require real participants and real visual competition.

Brand familiarity effects. Regular buyers of a brand tend to look at it very differently from first-time shoppers. Loyal buyers often don’t look at the package much at all; they recognize it peripherally and reach. New shoppers scan more deliberately. Both patterns show up in real data. A saliency model generates one prediction that applies to no one in particular. It’s worth noting that this problem runs deeper than it first appears: most predictive models are trained on participant data drawn heavily from academic research populations, psychology students, and lab volunteers who may bear little resemblance to the shoppers standing in your aisle.¹

Purchase intent and task framing. When we brief participants with a specific finding task, “you’re looking for a single-serve pasta sauce that includes protein,” their attention distribution changes. Real studies can replicate real shopping conditions. AI models cannot be briefed.

Outlier behavior. Heatmaps, by design, show you the average. Responses that deviate from the norm get smoothed out or excluded entirely. But in CPG research, outlier behavior is often where the most important findings live: the shopper who completely missed your redesigned logo, the segment that fixated on a claims hierarchy you assumed was secondary. Real eye tracking preserves these individual scan paths. A predictive model, trained on generalized populations, will never surface what it wasn’t built to see. As Dr. Tim Holmes of Tobii puts it, a reliance on general population-based predictive attention algorithms removes any potential for learning from outlier behavior.¹
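Two of the points above, viewing order and outlier behavior, are easy to illustrate with a small Python sketch. The participant IDs, area-of-interest (AOI) names, and scan paths below are invented for illustration; they are not data from any study. The sketch assumes each participant's recording has already been reduced to an ordered list of the AOIs they fixated.

```python
# Hypothetical data: each scan path is the ordered list of areas of
# interest (AOIs) one participant fixated. All names are made up.
from statistics import median

scan_paths = {
    "p01": ["logo", "claim", "flavor", "price"],
    "p02": ["flavor", "logo", "claim"],
    "p03": ["competitor", "flavor", "price"],  # never fixated the logo
    "p04": ["logo", "flavor", "claim", "logo"],
}

def first_fixation_rank(path, aoi):
    """1-based position of the first fixation on `aoi`, or None if never seen."""
    return path.index(aoi) + 1 if aoi in path else None

def summarize(paths, aoi):
    """Viewing-order summary for one AOI, keeping the people who missed it."""
    ranks = {pid: first_fixation_rank(path, aoi) for pid, path in paths.items()}
    seen = [rank for rank in ranks.values() if rank is not None]
    return {
        "median_first_rank": median(seen) if seen else None,
        "share_who_saw_it": len(seen) / len(ranks) if ranks else 0.0,
        "missed_by": sorted(pid for pid, rank in ranks.items() if rank is None),
    }

logo = summarize(scan_paths, "logo")
claim = summarize(scan_paths, "claim")
print(logo)
print(claim)
```

In this made-up sample the logo is typically seen first and the claim third, and one participant never looks at the logo at all. A static saliency map has no sequence to rank and no individuals to list under `missed_by`, so neither finding can come out of it.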

When AI Heatmaps Might Be Useful

I want to be fair here. Saliency models are far from useless.

If you’re a designer early in concepting and you want a fast gut-check on whether a particular element is likely to disappear visually (too low contrast, too small, too peripheral), an AI heatmap can absolutely surface that quickly. It’s a faster version of asking a design colleague to squint at something.

For broad visual hierarchy questions on a single design in isolation, they offer a reasonable directional signal at low cost.

But if your question is whether shoppers will notice this on the shelf, understand what it means, and be more likely to pick it up, those are not questions a saliency model is built to answer. That requires real people, in a real context, with real purchase intent.

The Stakes Are Higher Than They Look

CPG packaging decisions aren’t cheap to reverse. A redesign that goes to market and underperforms costs far more than the research that could have caught the problem before production.

The appeal of AI heatmaps is real: they’re fast, they’re inexpensive, and they produce outputs that look like data. But looking like data and being data are different things. When the output is generated by a model trained predominantly on non-CPG contexts like websites, UI, and academic stimuli in a controlled environment, and then applied to a shelf environment it has never encountered, the confidence interval on that prediction is far wider than the tool suggests.

You don’t have to take my word for it. Ask any AI heatmap provider how much of their training data came from CPG packaging studies, conducted on the shelf, with actual shoppers in a purchase context. Then compare that answer to what they’re claiming. I know what those studies require: I have worked on hundreds of studies covering tens of thousands of respondents.

The Bottom Line

AI heatmaps are a saliency prediction tool dressed in eye-tracking language. They are useful in limited, early-stage contexts where speed matters more than behavioral results. They are not a substitute for measuring how real shoppers actually look at real packaging under real shopping conditions.

Webcam eye tracking isn’t perfect either; no research methodology is. But it produces genuine behavioral data from real people, in conditions you control and design to match the actual purchase environment. That data is messy, sometimes counterintuitive, and almost always more useful than a clean model output.

If you’re making packaging decisions that matter, the signal you need is in the mess.

Rich Insights Research specializes in webcam-based eye tracking for CPG package design testing. If you want to understand how your packaging actually performs on the shelf, not how a model predicts it might, please book a free discovery call.