If you’ve ever wondered whether eye-tracking is more than just colorful heat maps, here’s what the data actually tells you and why it matters for your packaging.

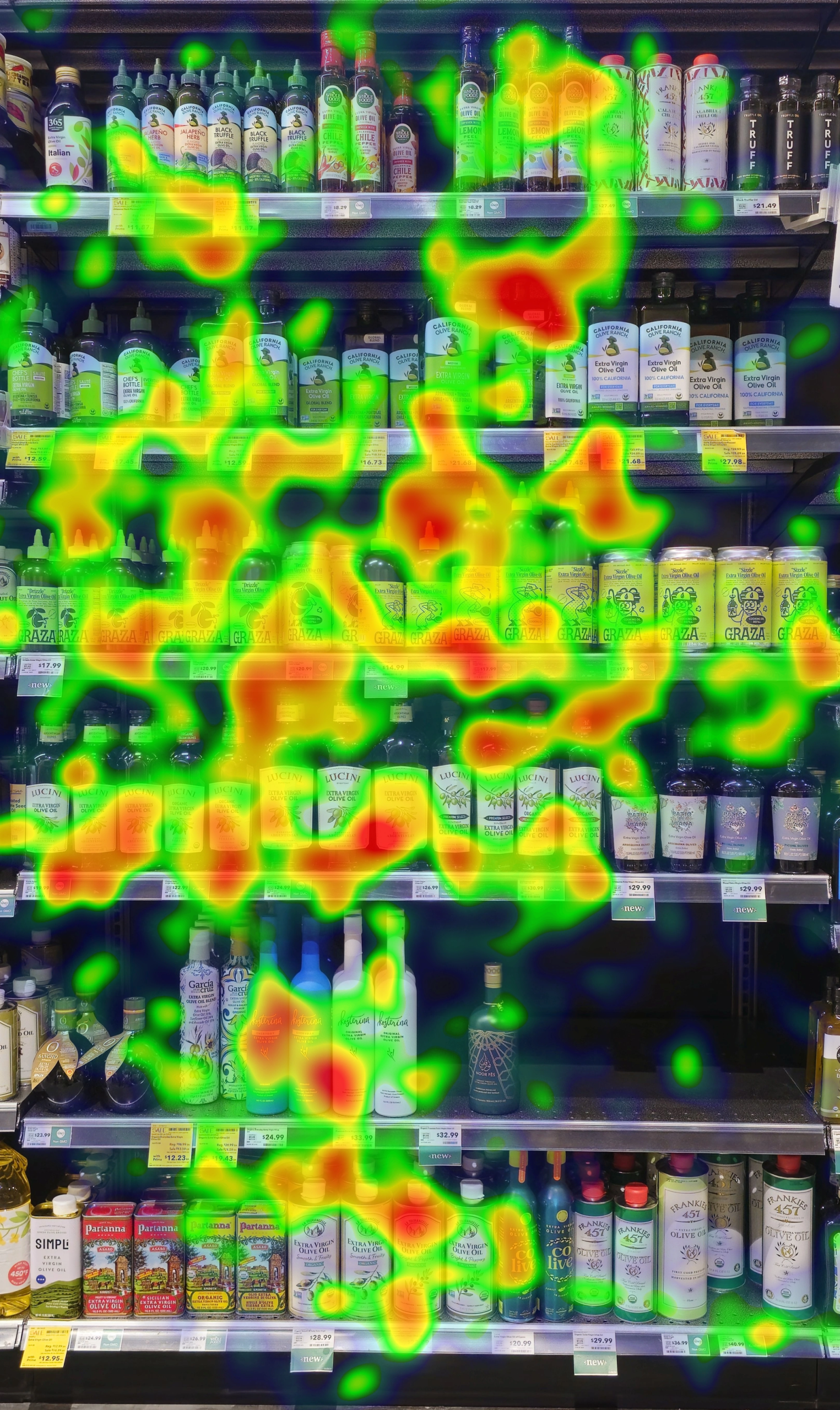

Eye tracking packaging design studies have become one of the most powerful tools in the CPG brand toolkit, but they’re still widely misunderstood or completely unknown. Many people have seen the heat maps, glowing blobs of red and yellow over a package or shelf, but the meaningful data goes much deeper than that. To be honest, heat maps are one of the least useful tools by themselves. A well-designed study produces a layered picture of how shoppers actually experience your product: how quickly it stands out, how well they understand it, and whether it communicates the right things before a decision is made.

Here’s a breakdown of what a full eye-tracking study actually measures, and how the pieces work together to give you a well-rounded understanding of how your package performs.

How Eye Tracking Packaging Design Studies Work

A typical eye-tracking study for CPG packaging runs participants through five sequential steps:

1. Recruitment & Calibration: Participants are recruited from a consumer panel that matches your target shopper. Because modern webcam-based eye tracking is done entirely online, you can reach hundreds of respondents without a lab or in-person session. Each participant completes a calibration exercise before any stimulus is shown, which helps ensure the data is accurate and usable.

2. The Shopping Exercise: Shoppers are shown a shelf image, typically a current retailer set or one your brand is targeting, and asked to shop it naturally. They select the products they’d be interested in purchasing. This is the most naturalistic part of the study; it mimics the actual retail scenario without being in a store.

3. Findability Tasks: Respondents are asked to find a specific product on the shelf as quickly as they can. These tasks are timed and benchmarked either against competitive products or across multiple design iterations of your own lineup.

4. Individual Pack Viewing: Each product is shown in isolation for 2–3 seconds, replicating the brief moment of attention a package actually receives on a real shelf.

5. Survey Questions: The study closes with a questionnaire that explores decision-making, purchase drivers, benefit perception, and what the design did or didn’t communicate.

What Eye Tracking Packaging Design Tells You About Shelf Performance

The shopping exercise is where you learn how your brand performs in context. Eye-tracking software maps where every participant looked, in what order, and for how long. This produces a set of metrics that describe the macro-level dynamics of your packaging on the shelf.

Standout (% Noticed and Time to Notice): What percentage of shoppers had at least one fixation on your product, and how many seconds it took before they noticed it. This is the fundamental attention metric. A product that stands out quickly and reliably is far more likely to enter a shopper’s consideration set. These are arguably the most important metrics when evaluating a design.

Engagement Time: How long shoppers spent looking at your product once they noticed it. Standout gets you seen; engagement means they paused. High engagement combined with high purchase rates is a strong signal of effective design. High engagement with low purchase rates is often a sign of a confusing design.

% of Purchases and Time to Purchase: What share of shoppers ultimately selected your product, and how long the decision took. This is where you can detect a disconnect. A design might earn the highest engagement on the shelf but still underperform on purchases, which is a clear signal that something in the communication isn’t converting interest into intent.

Seen Order: Which brands are noticed first, and in what sequence shoppers move through the rest of the shelf. This tells you whether shoppers are noticing your products ahead of the others around them.

Together, these metrics paint a picture of your design’s macro effectiveness: how well it attracts attention, holds it, and converts it into action.
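To make these definitions concrete, here is a minimal sketch of how the shelf metrics could be computed from fixation data. It is an illustration under stated assumptions, not how any particular eye-tracking platform works: the `Fixation` record, the `shelf_metrics` function, the field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Fixation:
    participant: str
    product: str       # product the gaze landed on (hypothetical field)
    start_s: float     # seconds since the shelf image appeared
    duration_s: float  # length of the fixation in seconds


def shelf_metrics(fixations, purchases, participants, product):
    """Summarize standout, engagement, and purchase share for one product."""
    first_seen = {}  # participant -> time of first fixation on the product
    dwell = {}       # participant -> total seconds spent on the product
    for f in fixations:
        if f.product != product:
            continue
        earliest = first_seen.get(f.participant, f.start_s)
        first_seen[f.participant] = min(earliest, f.start_s)
        dwell[f.participant] = dwell.get(f.participant, 0.0) + f.duration_s

    total = len(participants)
    noticed = len(first_seen)
    buyers = sum(1 for p in participants if product in purchases.get(p, set()))
    return {
        "pct_noticed": 100 * noticed / total,
        # Averages are taken only over shoppers who noticed the product.
        "avg_time_to_notice_s": mean(list(first_seen.values())) if noticed else None,
        "avg_engagement_s": mean(list(dwell.values())) if noticed else None,
        "pct_purchased": 100 * buyers / total,
    }


# Made-up sample: four shoppers, two products on the shelf.
participants = ["p1", "p2", "p3", "p4"]
fixations = [
    Fixation("p1", "A", 0.5, 0.5),
    Fixation("p1", "B", 1.0, 0.25),
    Fixation("p1", "A", 2.0, 0.5),
    Fixation("p2", "B", 0.25, 0.5),
    Fixation("p2", "A", 1.5, 1.0),
    Fixation("p3", "B", 0.5, 0.75),
]
purchases = {"p1": {"A"}, "p2": {"B"}, "p3": {"B"}}

metrics_a = shelf_metrics(fixations, purchases, participants, "A")
metrics_b = shelf_metrics(fixations, purchases, participants, "B")
print(metrics_a)
print(metrics_b)
```

Reading the two summaries side by side is the point: a product can match a competitor on engagement and still trail it on purchases, which is the disconnect described above.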

What Findability Tells You: Flavor and Benefit Communication

The findability task seeks to answer the question: can shoppers actually find what they’re looking for on your packaging?

Participants are given a task, such as “Find the Lemon flavor” or “Find the product with the most protein,” and the time it takes them to complete it is recorded. This creates a performance benchmark that can be used in two ways:

Within your own lineup: When your SKU lineup is tested together, findability tasks reveal whether your flavor cues, color coding, or naming architecture are working. If shoppers take significantly longer to find a specific variety, or select the wrong one more often, that’s a design problem; they’re not processing the differentiation cues quickly enough. This is especially important for brands with large flavor assortments.

Against competitive products: When your products are placed alongside competitors, findability tasks reveal how well your category cues communicate relative to others. If shoppers find a competitor’s benefit faster than yours, the data helps you see why, and usually it’s because something in the visual hierarchy is working against you.

Findability benchmarks vary by shelf complexity, but faster and more accurate selection is always better.
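As a rough illustration of how such a benchmark might be tabulated, the sketch below compares two design iterations on accuracy and on median time to a correct selection. The `findability_summary` function, the design labels, and the trial data are hypothetical, and using the median of correct trials is one reasonable choice among several, not a stated standard.

```python
from statistics import median


def findability_summary(trials):
    """trials: list of (design, seconds_to_select, picked_correct_product)."""
    by_design = {}
    for design, seconds, correct in trials:
        by_design.setdefault(design, []).append((seconds, correct))

    summary = {}
    for design, results in by_design.items():
        # Only time the trials where the shopper picked the right product.
        correct_times = [s for s, ok in results if ok]
        summary[design] = {
            "accuracy_pct": 100 * len(correct_times) / len(results),
            "median_seconds_correct": median(correct_times) if correct_times else None,
        }
    return summary


# Made-up trials for a "Find the Lemon flavor" task on two designs.
trials = [
    ("current", 4.0, True), ("current", 6.0, True),
    ("current", 9.0, False), ("current", 5.0, True),
    ("redesign", 3.0, True), ("redesign", 2.0, True),
    ("redesign", 4.0, True), ("redesign", 3.5, True),
]

summary = findability_summary(trials)
for design, stats in summary.items():
    print(design, stats)
```

Reporting accuracy next to speed matters because a fast but wrong selection is not a findability win.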

What Eye Tracking Packaging Design Tells You About Individual Pack Understanding

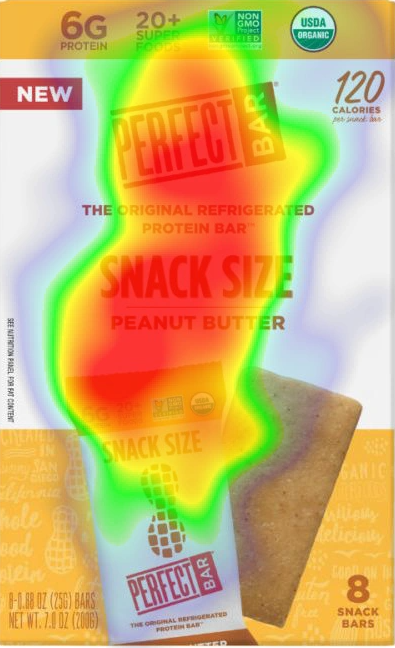

Showing your product in isolation for 2–3 seconds is meant to replicate the brief window of attention that packaging actually receives. It’s a quick glance on a busy shelf. And the data from this exercise is about comprehension, not just attention.

The eye-tracking data shows exactly which elements shoppers looked at during those seconds and in what order, so you can map what they saw against what your brand intended them to see. You can match the eye-tracking hierarchy with the shopper decision hierarchy to see if there are any glaring differences or gaps.

This is where you learn whether your hierarchy is working. If 70% of shoppers fixated on your brand name but only 30% noticed your key benefit claim, there’s a structural issue in the design. If the flavor image attracted limited attention and flavor is a main driver of purchase in your category, that’s an issue.
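One way to picture that comparison is the sketch below, which computes the share of shoppers who fixated each pack element and flags elements whose observed rank differs from the intended one. The element names, the `intended_order` list, and both helper functions are hypothetical and only meant to show the idea.

```python
def element_attention(viewings, elements):
    """viewings: participant -> ordered list of pack elements they fixated."""
    n = len(viewings)
    return {
        e: 100 * sum(1 for seq in viewings.values() if e in seq) / n
        for e in elements
    }


def hierarchy_gaps(pct_noticed, intended_order):
    """Return (element, intended_rank, observed_rank) where the ranks differ."""
    observed = sorted(intended_order, key=lambda e: -pct_noticed[e])
    gaps = []
    for intended_rank, element in enumerate(intended_order, start=1):
        observed_rank = observed.index(element) + 1
        if observed_rank != intended_rank:
            gaps.append((element, intended_rank, observed_rank))
    return gaps


# Made-up viewing sequences from a short isolated pack exposure.
viewings = {
    "p1": ["brand name", "flavor image"],
    "p2": ["brand name", "benefit claim"],
    "p3": ["flavor image", "brand name"],
    "p4": ["brand name"],
    "p5": ["flavor image"],
}
# Assumed priority the brand wants shoppers to take in, most important first.
intended_order = ["brand name", "benefit claim", "flavor image"]

pct = element_attention(viewings, intended_order)
gaps = hierarchy_gaps(pct, intended_order)
print(pct)
print(gaps)
```

In this made-up sample the benefit claim is meant to rank second but is the least-noticed element, which is the kind of structural gap described above.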

The Questions: What Behavior Alone Can’t Tell You

Eye-tracking is a behavioral measure. It tells you what happened: where people looked, for how long, and in what order. But it doesn’t tell you why they felt pulled to pick something up, or what belief about your product got in the way.

A simple questionnaire adds that layer. It explores what benefits feel most relevant in the category, which claims feel credible or confusing, and what emotional or rational triggers drive purchase. Used alongside the behavioral data, the survey answers the question eye-tracking leaves open: what does the brand need to communicate, and is it doing that?

Why Eye Tracking Packaging Design Matters for Your Brand

Packaging decisions are high-stakes and expensive to reverse. An eye-tracking study run online, with a sample of real shoppers and an accurate shelf set, can provide the behavioral evidence to make design decisions with confidence. It goes much deeper than whether the design looks good and gets at whether it works: does it stand out, does it communicate, does it convert?

That’s what the data actually tells you. And increasingly, it’s the standard that best-in-class CPG brands hold their packaging to before a single unit goes to retail.

Rich Insights is a consumer research company specializing in eye-tracking studies for CPG brands. We use webcam-based eye tracking to test packaging and shelf performance with real shoppers remotely, at scale, and without a lab.